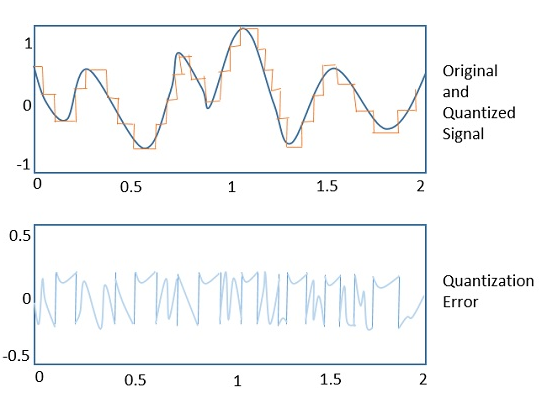

The first five scripts are based on mid-rise uniform quantization (an even number of quantization levels), whereas the last two are based on µ-law nonuniform quantization. These seven MATLAB scripts use five further MATLAB functions: uniform_pcm.m, mulaw_pcm.m, mulaw.m, invmulaw.m, and signum.m.

These databases are used effectively in collaborative environments for information extraction; consequently, they are vulnerable to security threats concerning ownership rights and data tampering.
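To make the difference between mid-rise uniform quantization and µ-law quantization concrete, here is a minimal NumPy sketch. It is not a port of uniform_pcm.m or mulaw_pcm.m; the function names, the default µ = 255, and the assumption that the signal is already normalised to [-1, 1] are choices made for illustration only.

```python
import numpy as np

def midrise_quantize(x, n_levels, x_max=1.0):
    """Uniform mid-rise quantizer with an even number of levels on [-x_max, x_max]."""
    step = 2.0 * x_max / n_levels
    # Mid-rise: outputs sit at odd multiples of step/2, so zero is a decision boundary.
    q = step * (np.floor(np.asarray(x, dtype=float) / step) + 0.5)
    # Clip overload values to the outermost reconstruction levels.
    return np.clip(q, -x_max + step / 2, x_max - step / 2)

def mulaw_compress(x, mu=255.0):
    """Mu-law compressor for inputs normalised to [-1, 1]."""
    x = np.asarray(x, dtype=float)
    return np.sign(x) * np.log1p(mu * np.abs(x)) / np.log1p(mu)

def mulaw_expand(y, mu=255.0):
    """Inverse of mulaw_compress."""
    y = np.asarray(y, dtype=float)
    return np.sign(y) * np.expm1(np.abs(y) * np.log1p(mu)) / mu

def mulaw_quantize(x, n_levels, mu=255.0):
    """Nonuniform quantizer: compress, quantize uniformly, then expand."""
    return mulaw_expand(midrise_quantize(mulaw_compress(x, mu), n_levels), mu)
```

Because the compressor stretches small amplitudes before the uniform step is applied, the resulting levels are denser near zero and coarser near full scale, which is the point of the nonuniform scheme.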

The code

The implementation of color quantization via random palette selection is very easy. In the following snippet, both the input variable raster and the output variable quantized_raster are numpy.ndarrays, holding the raster of the original image and the raster of the quantized image respectively. Both can be encoded in RGB or Lab (the output raster has the same encoding as the input) and have shape (width, height, 3).

The code

Here's the code to perform color quantization through k-means.
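Before turning to k-means, the random palette selection described above can be sketched roughly as follows. This is an assumption about how such a step might look, not the original snippet: the name quantize_random, the seed parameter, drawing the palette from the image's own pixels, and matching by squared Euclidean distance are all illustrative choices.

```python
import numpy as np

def quantize_random(raster, n_colors, seed=None):
    """Quantize a (width, height, 3) raster with a randomly chosen palette."""
    width, height, depth = raster.shape
    pixels = np.reshape(raster, (width * height, depth)).astype(float)

    # Assumption: the palette is n_colors distinct pixels drawn at random from the image.
    rng = np.random.default_rng(seed)
    palette = pixels[rng.choice(len(pixels), size=n_colors, replace=False)]

    # Squared distance from every pixel to every palette color, then pick the nearest.
    dists = ((pixels[:, None, :] - palette[None, :, :]) ** 2).sum(axis=2)
    labels = dists.argmin(axis=1)

    return np.reshape(palette[labels], (width, height, depth))
```

The distance matrix has one row per pixel and one column per palette color, so for large images it may be worth processing the pixels in chunks.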

The following function has the same signature as the one used for random selection, and what has already been discussed regarding raster loading, color space conversion, and result visualization still applies.

    import numpy as np
    from sklearn import cluster

    def quantize(raster, n_colors):
        width, height, depth = raster.shape
        # Flatten the image into a list of pixels, one row per pixel.
        reshaped_raster = np.reshape(raster, (width * height, depth))

        # Cluster the pixel colors; the cluster centers form the palette.
        model = cluster.KMeans(n_clusters=n_colors)
        labels = model.fit_predict(reshaped_raster)
        palette = model.cluster_centers_

        # Replace each pixel with its cluster center and restore the image shape.
        quantized_raster = np.reshape(
            palette[labels], (width, height, palette.shape[1]))
        return quantized_raster

Conclusions

We can clearly see that k-means outperforms simple random selection. In fact, the images obtained with random selection with 64 colors are very similar to those obtained with k-means with 32 colors, regardless of the color space used. The drawback of k-means is that it is much slower, especially if the training is performed on all the pixels of the original image.

This can be mitigated by using only a random sample of the pixels. At first glance, the results of k-means in RGB space and k-means in Lab space are similar. However, a closer look at each pair of images reveals details that, in some cases, make one look better than the other. In general, for this particular image, the face seems clearer and more defined when using RGB, which is especially noticeable in the first two pairs of images. On the other hand, Lab space seems to render the reflection in the mirror and the hat better, and it also comes closer to the brightness of the original image. Overall, I wouldn't say that one color space is a better choice than the other when using k-means, at least not for this particular image.
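The sampling idea mentioned at the start of this section could look roughly like the sketch below: fit k-means on a random subset of the pixels, then assign every pixel to its nearest center. The name quantize_sampled and the parameters n_samples and seed are hypothetical, and the default sample size is an arbitrary choice rather than a recommendation.

```python
import numpy as np
from sklearn import cluster

def quantize_sampled(raster, n_colors, n_samples=1000, seed=0):
    """K-means quantization trained on a random sample of the pixels."""
    width, height, depth = raster.shape
    pixels = np.reshape(raster, (width * height, depth)).astype(float)

    # Train on at most n_samples pixels drawn without replacement.
    rng = np.random.default_rng(seed)
    n_samples = min(n_samples, len(pixels))
    sample = pixels[rng.choice(len(pixels), size=n_samples, replace=False)]

    model = cluster.KMeans(n_clusters=n_colors, n_init=10, random_state=seed)
    model.fit(sample)

    # Assign every pixel of the full image to its nearest cluster center.
    labels = model.predict(pixels)
    palette = model.cluster_centers_
    return np.reshape(palette[labels], (width, height, depth))
```

Training cost then depends on the sample size instead of the image size; only the final assignment step touches every pixel.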

You could use combinations of the heaviside function. I should mention that, depending on the convention, H(0) = 0.5.

    x = linspace(-1, 5);  % your signal to be quantized; could be anything
    % a, b and c decide the heights of your quantization
    % x1 and x2 decide the levels
    y = a*heaviside(x - x1) + b*heaviside(x - x2) + c;

If you want a fourth level, just use the following:

    y = a*heaviside(x - x1) + b*heaviside(x - x2) + c*heaviside(x - x3) + e;

To quantize to an arbitrary number of levels, use a loop over n thresholds xi with step heights h:

    fun = a;
    for k = 1:n
        fun = fun + h(k)*heaviside(x - xi(k));
    end
    fun = fun/normalization;  % normalization is a number to decide the level of your signal